Before and After OpenClaw: How the AI Market Outlook Shifted in 8 Weeks (feat. Lex Fridman Podcast vs. Noh Jungseok YouTube)

Introduction

I watched two videos about AI agents in Q1 this year. Similar timing, overlapping topics, but noticeably different perspectives. One was from early February, the other from late March. Less than eight weeks apart, yet the temperature gap was striking enough to warrant a cross-analysis.

In Lex Fridman Podcast #490, published February 1st, AI2’s Nathan Lambert assessed computer use like this:

"Computer use — Claude can use your computer, OpenAI had Operator, and they all suck."

— Nathan Lambert, Lex Fridman Podcast #490

Seven weeks later, on March 21st, Korean AI entrepreneur Noh Jungseok, fresh from the OpenClaw Seoul meetup, had a very different reaction: “That’s the future of work right there.” He’d just watched agents order chicken on Baemin and hail taxis on KakaoTaxi.

Same quarter, same topic. Where does this temperature gap come from? There was one event between the two videos: OpenClaw.

Before: “They All Suck” (February 1, 2026)

Lex Fridman Podcast #490 is a 4-hour-25-minute state-of-AI roundup. Sebastian Raschka, ML researcher and author of Build a Large Language Model (From Scratch), and Nathan Lambert, AI2 post-training lead and RLHF expert, joined as guests.

The Assessment of Computer Use

When agents and computer use came up, Nathan was quite direct:

"Computer use is a good example of what labs care about and we haven't seen a lot of progress on. We saw multiple demos in 2025 — Claude can use your computer, OpenAI had Operator — and they all suck."

— Nathan Lambert (03:17:37)

Sebastian echoed the sentiment. The problem isn’t just model capability; it’s the interface through which users communicate tasks to agents.

"For arbitrary tasks, you still have to specify what you want your LLM to do. You can say what the end goal is, but if it can't solve the end goal... how do you, as a user, guide the model before it can even attempt that?"

— Sebastian Raschka (03:18:10)

You ask it to book a trip and it enters the wrong credit card info. Fixing that requires human re-intervention. The interface for that intervention? Not designed yet.

“Jagged AI” — Uneven Capabilities

Nathan introduced a framework in this episode: Jagged AI.

AI is already superhuman in certain domains: frontend coding, traditional ML pipeline construction, text summarization. But in areas where training data is sparse, such as distributed ML training system design, it still falls short. The interesting part: as models get stronger, this gap doesn’t shrink; it widens. What AI is good at keeps getting better, while what it’s bad at stays bad for lack of training data.

"I think the camp that I fall into is that AI is so-called 'jagged,' which will be excellent at some things and really bad at some things."

— Nathan Lambert (03:25:00)

Nathan was also skeptical of the milestones in the AI 2027 report: superhuman coder → AI researcher → ASI. “Superhuman coder” presupposes completeness, but completeness is nearly impossible for a jagged AI. His view: humans will remain in the loop for a long time, filling gaps and moving fast alongside AI.

“That Dream Is Dying”

One of Nathan’s more striking statements:

"That dream is actually kind of dying... the 'one model to rule everything' ethos."

— Nathan Lambert (03:25:56)

A single general-purpose model that codes, writes emails, books travel, and does scientific research is unlikely to materialize. Sebastian framed it as “amplification, not paradigm shift”: everything gets amplified, but the paradigm itself doesn’t change.

As of February 1st, these two researchers shared a common view — agents are premature, computer use is demo-grade, and the era of general-purpose agents may be further away than expected.

What Happened In Between: OpenClaw

Just before the Lex episode went live, OpenClaw arrived under its current name.

It started in November 2025, when Austrian developer Peter Steinberger connected an LLM to Telegram “in roughly one hour” on a Friday night and called it Clawdbot. After a trademark dispute with Anthropic, it was renamed OpenClaw on January 30th, and the Lex episode was published two days later, on February 1st. So when the episode aired, OpenClaw had only just taken its current name and hadn’t yet gone viral.

The explosion came after. Within weeks it became the fastest-growing open-source project in GitHub history, and on March 22nd a major update (v2026.3.22) landed with 45 new features and 82 bug fixes.

But what’s worth noting about OpenClaw isn’t the code itself — it’s the shift in how the problem was framed.

The questions Nathan and Sebastian grappled with were:

- Can AI use computers like humans from scratch?

- Can it handle logins, screen recognition, navigation, and error recovery?

- Can it receive arbitrary tasks in natural language and execute them end-to-end?

In February, the answer was clearly “not yet.” And that assessment was correct.

OpenClaw asked a different question: “What if the agent operates in an environment where humans have already logged in?”

Apps are already authenticated on an emulator, using the human’s existing credentials and sessions. The agent calls APIs where they’re available and falls back to CUA (Computer Use Agent) screen manipulation where they aren’t. It doesn’t need to solve everything from scratch; it repeats defined flows on top of a human-configured environment.
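To make the API-first, CUA-fallback idea concrete, here is a minimal Python sketch. It is not OpenClaw’s code: the `Router` class, `screen_fallback`, and the service names are hypothetical, and the fallback only returns a string where a real computer-use agent would drive the already-logged-in app’s UI.

```python
from typing import Callable, Dict


def screen_fallback(service: str, task: str) -> str:
    # Hypothetical stand-in for a computer-use agent operating the app's UI
    # on an emulator where the human is already logged in.
    return f"[CUA] {service}: {task}"


class Router:
    """Hypothetical single entry point: API when registered, CUA otherwise."""

    def __init__(self, fallback: Callable[[str, str], str] = screen_fallback) -> None:
        self.api_handlers: Dict[str, Callable[[str], str]] = {}
        self.fallback = fallback

    def register_api(self, service: str, handler: Callable[[str], str]) -> None:
        # Services with a usable API get a direct handler.
        self.api_handlers[service] = handler

    def run(self, service: str, task: str) -> str:
        handler = self.api_handlers.get(service)
        if handler is not None:
            return handler(task)             # structured API call
        return self.fallback(service, task)  # screen manipulation


router = Router()
router.register_api("grocery", lambda task: f"[API] grocery: {task}")
print(router.run("grocery", "order water"))          # [API] grocery: order water
print(router.run("food_delivery", "order chicken"))  # [CUA] food_delivery: order chicken
```

The caller never picks the channel. If a service later exposes an API, only the registry changes, not the flow the agent runs.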

This was less a technological breakthrough and more a reframing of the problem.

After: “That’s the Future of Work” (March 21, 2026)

Noh Jungseok is CEO of Scionic/KYYB and one of the most active AI experimenters in the Korean AI community. Episode 91 of his YouTube show was recorded on Saturday morning, March 21st, the day after he attended the OpenClaw Seoul meetup.

OMO.BOT — An Agent App Prototype

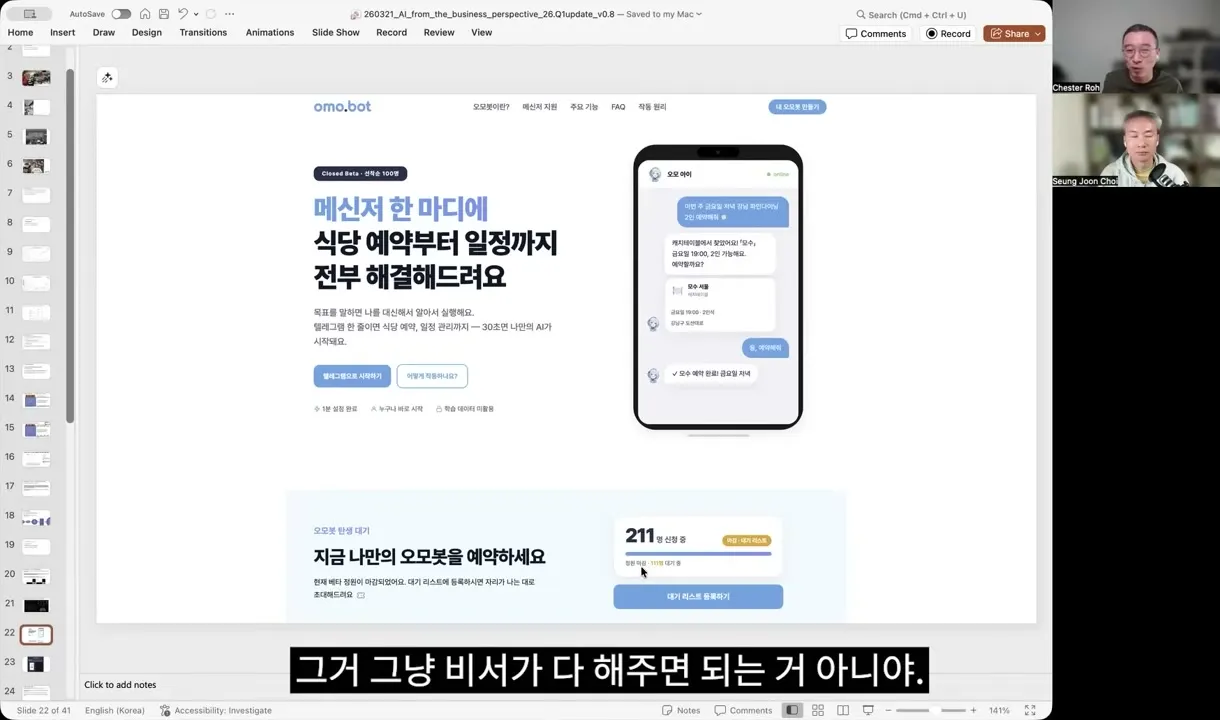

A demo by developer Simon left a strong impression — an agent app called OMO.BOT.

Want to order food? Open Baemin. Need water? Open Coupang. Need a taxi? Open KakaoTaxi. Each requires a different app. Why not just tell an assistant? OMO.BOT implemented exactly that. Services with APIs connect via API; those without use CUA. All through a single interface.

“They Become Another Agent’s Function Call”

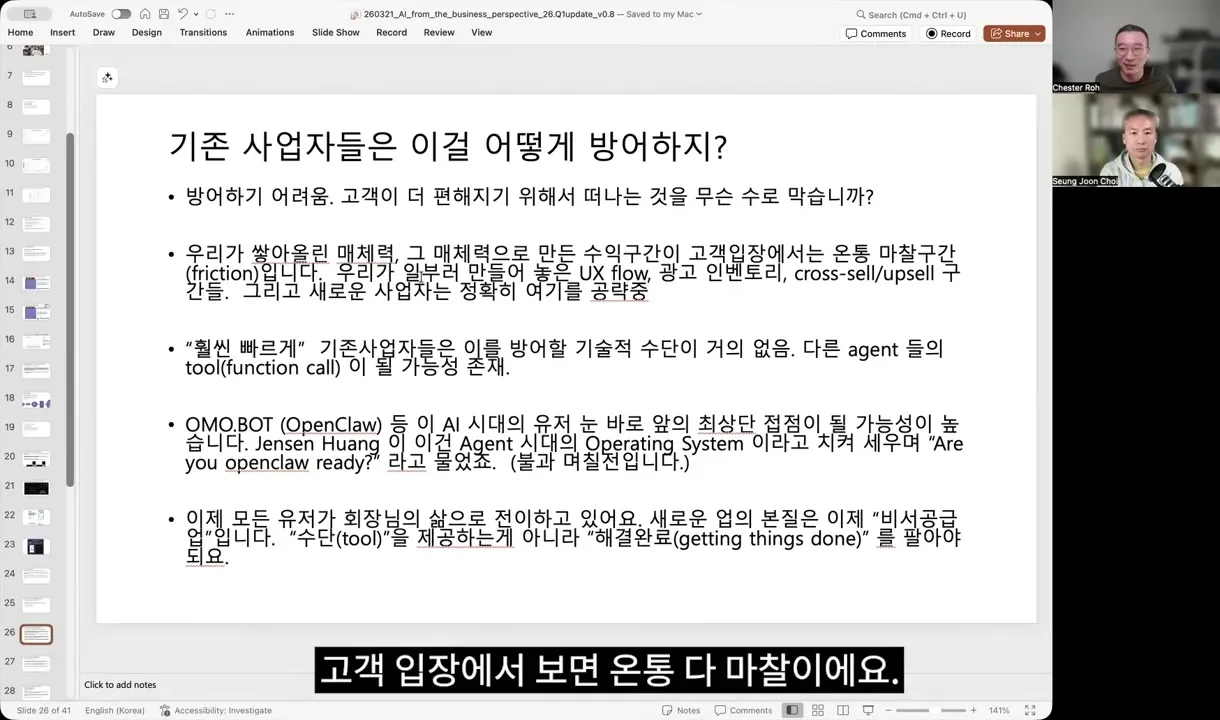

Noh Jungseok read a structural business shift into this demo:

"Existing businesses will very likely become just another agent's function call. They can't stop it."

— Noh Jungseok (47:10)

Baemin, Coupang, KakaoTaxi: what they’ve built is intermediation power between users and suppliers. Ads in the UX, cross-sell, forced navigation flows. That’s where their margins come from. Agents bypass all that friction, and from the user’s perspective, there’s less reason to go back.

His argument is straightforward:

"If I log into my emulator and my agent operates that emulator, how do you block it?"

— Noh Jungseok (48:22)

The computer use limitations behind Nathan’s “they all suck” (login failures, screen recognition errors) largely disappear in the OpenClaw paradigm. Authentication is already handled by humans; agents just repeat specific flows on top of that.

GTC’s Confirmation

At NVIDIA GTC 2026 (March 16th), Jensen Huang called OpenClaw “the next ChatGPT” and an “operating system for personal AI.” Less than two months after the rename, it was being name-dropped in NVIDIA’s keynote. NVIDIA also announced NemoClaw, an enterprise version.

Noh Jungseok summarized the trend with a question — “Are you OpenClaw ready?” Every business needs to answer it now.

What Changed

Looking at the temperature gap between the two videos, the difference seemed to lie less in technical capability than in problem framing.

Lab-driven vs Community-driven

What the Lex guests evaluated were lab-built, top-down solutions such as Anthropic’s Claude Computer Use and OpenAI’s Operator: a single model trying to handle screen recognition, login, navigation, and error recovery end-to-end. That approach genuinely didn’t work well.

OpenClaw was a community-built, bottom-up assembly. The model doesn’t need to do everything alone. Humans set up the environment; agents operate within it. API plugins, CUA modules, and harnesses get rapidly assembled by the community.

This pattern has recurred throughout tech history: IBM mainframes (top-down) vs the PC assembly ecosystem (bottom-up), Apple’s closed ecosystem vs Android’s open one. Once a technology matures sufficiently, community-driven assembly tends to overtake lab-driven solutions.

Bypassing the Specification Problem

Sebastian raised a key issue: “How do you communicate arbitrary tasks to agents?” For complex tasks like booking travel, the user has to specify all conditions before the model can even attempt execution.

In the OpenClaw ecosystem, this problem is solved differently. Instead of a generic “do anything,” specific flows for specific services are built as harnesses. “Order chicken on Baemin” has defined steps; agents follow them. The specification is already embedded in the harness.
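A harness with the specification baked in might look roughly like the sketch below. This is an illustrative assumption, not how OpenClaw actually structures its harnesses: `Harness`, `order_chicken`, and the step labels are hypothetical, and each step is a stub standing in for an API call or CUA action. The user supplies only the parameters; the order of steps is fixed in advance, and a failed step stops the flow and hands control back to a human instead of improvising.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple

# A step gets the shared state and reports success (True) or failure (False).
Step = Tuple[str, Callable[[Dict[str, str]], bool]]


@dataclass
class Harness:
    name: str
    required: List[str]  # the only things the user must specify
    steps: List[Step]    # fixed flow, written once by whoever built the harness

    def run(self, params: Dict[str, str]) -> str:
        missing = [key for key in self.required if key not in params]
        if missing:
            return "need input: " + ", ".join(missing)
        state = dict(params)
        for label, action in self.steps:
            if not action(state):
                # Hand control back instead of guessing (e.g. a wrong card).
                return f"stopped at '{label}': human review needed"
        return "done"


# Hypothetical flow; each lambda stands in for an API call or CUA action.
order_chicken = Harness(
    name="order_chicken",
    required=["menu_item", "address"],
    steps=[
        ("open app", lambda s: True),
        ("search menu", lambda s: bool(s["menu_item"])),
        ("confirm address", lambda s: bool(s["address"])),
        ("pay with saved card", lambda s: True),
    ],
)

print(order_chicken.run({"menu_item": "fried chicken"}))                     # need input: address
print(order_chicken.run({"menu_item": "fried chicken", "address": "Seoul"}))  # done
```

The point of the structure is that “what to do” lives in `steps`, so the only open question at runtime is the handful of values in `required`.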

This aligns with a conclusion Noh Jungseok reached independently:

"Clean data connectors, good prompts, and a frontier model — that's what performs best."

— Noh Jungseok (58:24)

His company tried just about every approach over four years (Pydantic, LangChain, agent SDKs, LoRA, custom models) and landed here. Sebastian’s emphasis on spec-driven design in the Lex episode is essentially the same insight: most AI coding failures stem not from model limitations but from inadequate human specifications.

Both sides pointed to the same conclusion: models are already strong enough; the bottleneck is how we connect models to the real world. In February, that connection method wasn’t visible. By March, OpenClaw had demonstrated one answer.

Questions That Remain

OpenClaw didn’t solve everything. Several concerns from the Lex episode remain valid.

Security and Prompt Injection

Noh Jungseok was candid about this:

"I didn't dare install OpenClaw on my laptop. I set up a VM with a fresh Linux install to test it, and I have it running on an external DGX box."

— Noh Jungseok (01:11:16)

Social and financial credentials still can’t be delegated safely. As agent autonomy increases, so does the risk of prompt injection causing unintended behavior. Co-host Choi Seungjun also noted the vulnerability: agents can be injected, and even 2FA can be compromised.

Jagged AI Still Holds

Nathan’s “jagged AI” framework remains valid post-OpenClaw. What OpenClaw bypassed was the access and authentication problem, not the model’s inherent unevenness. Following defined flows works well, but judgment in edge cases can still be unreliable.

Noh Jungseok has a different time horizon on this, though. He cited Claude Code creator Boris Cherny:

"The most general one is the most specific one. If it can't solve it now, shelve it — the model will solve it in six months."

— Boris Cherny (via Noh Jungseok)

Economic Impact Is Still Pending

A shared question from the Lex episode also remains: AI’s economic impact hasn’t actually shown up in GDP yet. Agents ordering chicken for you is convenient, but translating that into industry-restructuring economic change will take more time.

Cross-Cutting Takeaways

Placing these two videos side by side, three observations stood out.

First, AI outlook has a shorter shelf life now. Nathan’s “they all suck” was an accurate assessment in February. But seven weeks later, it was already incomplete. Even expert judgment needs updating within 8 weeks.

Second, reframing can move faster than technical breakthroughs. OpenClaw didn’t “solve” Computer Use. Screen recognition didn’t suddenly improve. Instead, it shifted from “AI must use computers from scratch” to “agents repeat defined tasks in human-configured environments” — bypassing existing limitations.

Third, researchers and practitioners point to the same place. Nathan’s “spec-driven design” and Noh Jungseok’s “data connectors + prompts + frontier model.” Sebastian’s “domain understanding is key” and Noh Jungseok’s “good prompts require domain knowledge, not engineering skill.” Different languages, same convergence point: models are already strong enough, and the bottleneck lies in the quality of specification connecting models to reality.

Noh Jungseok expressed this in his own terms:

"We're entering an era where every problem is converted into a search problem using compute, and that compute solves the problem instantly."

— Noh Jungseok (10:17)

Nathan said something similar from a different angle:

"RLVR in real scientific domains — startups with wet labs where they're having language models propose hypotheses that are tested in the real world."

— Nathan Lambert (02:15:30)

Whoever can create verifiable reward signals (the “VR” in RLVR, reinforcement learning with verifiable rewards) has the advantage. In Noh Jungseok’s language: “environments that convert non-verifiable into verifiable.” In Nathan’s: “startups building RLVR environments.” Same structure.

OpenClaw became one example of such an “environment.” Community-built harnesses and plugins serve as reward environments. Agents perform tasks, success/failure feeds back immediately, and that feedback produces better harnesses. What Noh Jungseok called the “ouroboros” — models improve harnesses, harnesses improve data, data improves models. A self-reinforcing loop.
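The feedback half of that loop can be sketched in a few lines of Python, under loud assumptions: `verify`, `success_rate`, `next_step_to_fix`, and the `confirmation_id`/`failed_step` fields are hypothetical, and the success condition (an order confirmation came back) is just one example of a check that turns an agent run into a verifiable 0/1 reward. It covers only the harness-improvement step; feeding the resulting data back into model training is out of scope.

```python
from collections import Counter
from typing import Dict, List, Optional

Outcome = Dict[str, str]


def verify(outcome: Outcome) -> int:
    # Verifiable reward: 1 only if an observable success signal exists
    # (here, hypothetically, an order confirmation ID), otherwise 0.
    return 1 if outcome.get("confirmation_id") else 0


def success_rate(outcomes: List[Outcome]) -> float:
    return sum(verify(o) for o in outcomes) / len(outcomes) if outcomes else 0.0


def next_step_to_fix(outcomes: List[Outcome]) -> Optional[str]:
    # Count which harness step failed most often among unrewarded runs;
    # that step is the first candidate for a better harness.
    failures = Counter(
        o["failed_step"] for o in outcomes if verify(o) == 0 and "failed_step" in o
    )
    return failures.most_common(1)[0][0] if failures else None


runs = [
    {"confirmation_id": "A1"},
    {"failed_step": "select payment"},
    {"failed_step": "select payment"},
    {"failed_step": "confirm address"},
]
print(success_rate(runs))      # 0.25
print(next_step_to_fix(runs))  # select payment
```

The reward comes from the environment (did a confirmation appear?) rather than from the model judging its own output, which is what makes the signal usable for the next round of harness fixes.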

Eight weeks ago, this loop wasn’t visible. Now it’s running.

Videos analyzed in this post:

- Lex Fridman Podcast #490 “State of AI in 2026” (2026.02.01) — Sebastian Raschka, Nathan Lambert

- Noh Jungseok EP91 “AI from a Business Perspective, 26 Q1” (2026.03.21) — Noh Jungseok, Choi Seungjun
