AI products are fundamentally different from traditional software. This post distills 12 counterintuitive lessons from 20 builders, the CC/CD framework for AI development lifecycles, and a 3-phase eval system — all sourced from Lenny Rachitsky's newsletter.

Why AI Products Are Fundamentally Different

Traditional software is deterministic: the same input gives the same output, so a feature either works or it doesn’t. AI products are probabilistic: the same input can produce different outputs. This difference breaks many of the assumptions that product development rests on.

According to Emergence Capital, 60% of companies have already integrated generative AI into their products. Yet 2 in 5 gen AI products still haven’t made a single dollar despite millions (or billions) in spending.

This post distills frameworks from three key pieces in Lenny Rachitsky’s newsletter:

- Counterintuitive Advice for Building AI Products (2024.07) — 12 lessons from 20 companies

- Why Your AI Product Needs a Different Development Lifecycle (2025.08) — The CC/CD framework

- Building Eval Systems That Improve Your AI Product (2025.09) — 3-phase eval playbook

12 Counterintuitive Lessons

| # | Conventional Wisdom | Counterintuitive Truth | Source |
|---|---|---|---|
| 1 | Start with user needs | Start with “what’s technically possible?” | Paul Adams, Intercom |
| 2 | Demo = Product | Watch for Phantom PMF | Joshua Xu, HeyGen |
| 3 | Model quality is key | UX, privacy, post-processing matter more | Ryan J. Salva, GitHub |
| 4 | Don’t label it “AI” | AI branding increases engagement AND comprehension | James Evans, CommandBar |
| 5 | Build big AI features | Small invisible AI is the fan favorite | Claire Vo, LaunchDarkly |
| 6 | Time in app = success | Best AI reduces time in app | Gaurav Misra, Captions |
| 7 | Start with easy problems | Startups are safer tackling hard problems | Sarah Guo, Conviction |
| 8 | Ship new features | Prompt improvement alone is often enough | Sherif Mansour, Atlassian |
| 9 | Quality over speed | Speed is the competitive advantage (pre-compute wins) | Rahul Vohra, Superhuman |
| 10 | Stack logic on models | Fine-tuning beats excessive scaffolding | Henri Liriani, Tome |
| 11 | Customization later | Users want customization from day one | Johnny Ho, Perplexity |
| 12 | Models are the moat | Data and interfaces matter more than models | Scott Belsky, Adobe |

Key quotes:

“Most people think about AI services in terms of model quality, but model quality is just a tiny piece. Post-processing filters, data privacy, feedback loops are all far more important.” — Ryan J. Salva, GitHub

“The smallest (and almost invisible) features are usually the fan favorites.” — Claire Vo, LaunchDarkly

GitHub Copilot’s suggestion acceptance rate is 35%, and the improvements came from UX optimization rather than model swaps.

The CC/CD Framework

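Traditional CI/CD assumes that behavior verified before release stays fixed after it, which does not hold for AI products. AI needs Continuous Calibration / Continuous Development (CC/CD): develop and deploy, then keep evaluating and correcting behavior in production.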

graph TB

subgraph CD["Continuous Development"]

CD1["CD 1: Scope capability + curate data"]

CD2["CD 2: Set up application"]

CD3["CD 3: Design evals"]

CD1 --> CD2 --> CD3

end

CD3 --> DEPLOY["Deploy"]

subgraph CC["Continuous Calibration"]

CC4["CC 4: Run evals"]

CC5["CC 5: Analyze behavior + spot patterns"]

CC6["CC 6: Apply fixes"]

CC4 --> CC5 --> CC6

end

DEPLOY --> CC4

CC6 -->|"iterate"| CC4

CC6 -->|"major change"| CD1

Agency should be granted incrementally, like onboarding a new teammate:

| Version | Control | Agency | Example (Marketing Assistant) |
|---|---|---|---|
| v1 | High | Low | Draft copy from prompts |
| v2 | Medium | Medium | Build multi-step campaigns |
| v3 | Low | High | Launch + A/B test + auto-optimize |
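One way to read the table above is as a permission gate that widens with each version. The sketch below is illustrative only: the action names (`draft_copy`, `build_campaign`, `launch_campaign`, `auto_optimize`), the `AgencyLevel` enum, and the `is_allowed` helper are assumptions made for this example, not part of the CC/CD article.

```python
from enum import IntEnum


class AgencyLevel(IntEnum):
    """Hypothetical agency tiers mirroring v1-v3 in the table."""
    V1_LOW = 1
    V2_MEDIUM = 2
    V3_HIGH = 3


# Assumed mapping: the minimum agency level at which the assistant may
# perform each action without a human approving it first.
MIN_LEVEL = {
    "draft_copy": AgencyLevel.V1_LOW,
    "build_campaign": AgencyLevel.V2_MEDIUM,
    "launch_campaign": AgencyLevel.V3_HIGH,
    "auto_optimize": AgencyLevel.V3_HIGH,
}


def is_allowed(action: str, level: AgencyLevel) -> bool:
    """Return True if `action` may run unattended at `level`.

    Actions missing from MIN_LEVEL are denied by default.
    """
    required = MIN_LEVEL.get(action)
    return required is not None and level >= required
```

Denying unknown actions by default keeps control high until someone deliberately adds an action to the mapping, which matches the idea of granting agency step by step.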

3-Phase Eval System

“The #1 misconception: ‘Can’t the AI just eval it?’ It doesn’t work.” — Hamel Husain

Phase 1: Error Analysis. Manually review about 100 interactions and note how each one fails. Appoint a single domain expert (a “Benevolent Dictator”) to make the quality calls, not a committee.
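A manual review like this typically ends in a tally of failure modes, so the most frequent ones get attention first. A minimal sketch, assuming each reviewed interaction has been hand-labeled with a single failure tag; the `ReviewedTrace` shape and the `rank_failure_modes` helper are hypothetical:

```python
from collections import Counter
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class ReviewedTrace:
    """One manually reviewed interaction (hypothetical structure)."""
    trace_id: str
    failure_tag: Optional[str]  # None means the reviewer found no failure


def rank_failure_modes(traces: List[ReviewedTrace]) -> List[Tuple[str, int]]:
    """Count failure tags and return them from most to least frequent."""
    tags = [t.failure_tag for t in traces if t.failure_tag is not None]
    return Counter(tags).most_common()
```

The ranked list is only as good as the labels behind it, which is one reason to have a single expert assign the tags consistently.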

Phase 2: Build Eval Suite. Use code-based evaluators for objective failures and LLM-as-a-Judge for subjective ones. Score with binary pass/fail, not Likert scales. Validate the judge against human labels using true positive rate (TPR) and true negative rate (TNR), not accuracy.
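To see why TPR/TNR is preferred over accuracy, it helps to compute all three on the same labels. The sketch below assumes binary verdicts where `True` means pass, and treats "pass" as the positive class; that convention and the `judge_agreement` helper are assumptions for illustration.

```python
from typing import Dict, List, Optional


def judge_agreement(
    human: List[bool], judge: List[bool]
) -> Dict[str, Optional[float]]:
    """Compare LLM-judge verdicts with human pass/fail labels.

    TPR: share of human-labeled passes the judge also passed.
    TNR: share of human-labeled failures the judge also failed.
    A rate is None when its denominator would be zero.
    """
    if len(human) != len(judge):
        raise ValueError("human and judge must have the same length")
    tp = sum(h and j for h, j in zip(human, judge))
    tn = sum((not h) and (not j) for h, j in zip(human, judge))
    positives = sum(human)
    negatives = len(human) - positives
    return {
        "tpr": tp / positives if positives else None,
        "tnr": tn / negatives if negatives else None,
        "accuracy": (tp + tn) / len(human) if human else None,
    }
```

With nine human-labeled passes and one failure, a judge that passes everything scores 0.9 accuracy while its TNR is 0.0: it never catches the failure it exists to find.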

Phase 3: Operationalize. A Safety Net (CI plus a golden dataset) prevents regressions. A Discovery Engine (LLM-as-a-Judge running asynchronously on production traffic) finds unknown unknowns.
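The Safety Net half can be sketched as a check that CI runs on every change. This is a minimal illustration under stated assumptions: `GoldenCase`, `passes`, and `failed_cases` are hypothetical names, the evaluator is a simple code-based term check, and `generate` stands in for whatever function calls your application.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class GoldenCase:
    """One golden-dataset entry (hypothetical structure)."""
    prompt: str
    required_terms: List[str]


def passes(output: str, case: GoldenCase) -> bool:
    """Binary code-based check: every required term appears in the output."""
    text = output.lower()
    return all(term.lower() in text for term in case.required_terms)


def failed_cases(
    generate: Callable[[str], str], golden: List[GoldenCase]
) -> List[str]:
    """Run every golden case and return the prompts that fail the check."""
    return [c.prompt for c in golden if not passes(generate(c.prompt), c)]
```

In a CI job, a test could assert that `failed_cases(app, GOLDEN)` is empty, so any change that breaks a known-good case blocks the merge. The Discovery Engine is a separate concern and is not covered by this sketch.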

Practical Checklist

The Make Me Unicorn AI Product Blueprint covers 45+ AI-specific items across model integration, cost control, prompt engineering, UX, data privacy, and monitoring.

pip install make-me-unicorn

mmu init && mmu scan

# See docs/blueprints/industry/ai-product.md

Related posts in this series:

- How AI Frameworks Make Money

- How AI Observability Platforms Make Money

- The Code Was Done, But Everything Else Wasn’t

Sources

- Lenny Rachitsky & Kyle Poyar, “Counterintuitive advice for building AI products,” Lenny’s Newsletter, 2024-07-02

- Aishwarya Reganti & Kiriti Badam, “Why your AI product needs a different development lifecycle,” Lenny’s Newsletter, 2025-08-19

- Hamel Husain & Shreya Shankar, “Building eval systems that improve your AI product,” Lenny’s Newsletter, 2025-09-09

Related Posts

Anatomy of the AI Market in 3 Layers — What the $660B Really Looks Like

First installment of a series analyzing the AI market across three layers: infrastructure ($500B+), platform ($18B+), and applications ($80B+)

GEO SaaS Landscape — Profound, Scrunch, Peec and 10 More Players

Comparative analysis of 10 major GEO SaaS players (Profound, Scrunch, Peec, etc.) covering features, pricing, and targets to derive WICHI's strategic positioning.

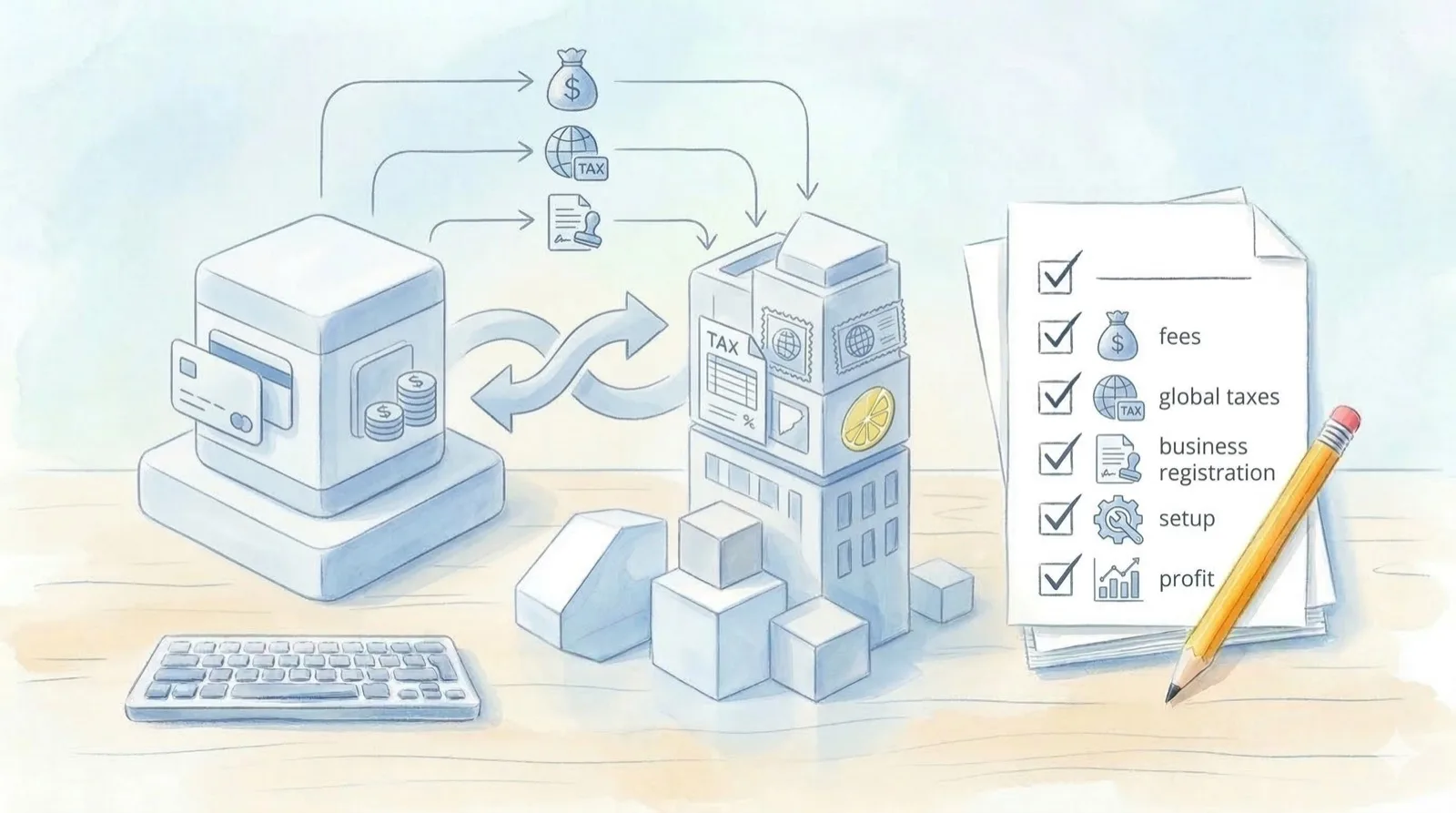

Lemon Squeezy vs Stripe — A Korean Solo SaaS Builder's Comparison

Comparative analysis of Lemon Squeezy (MoR) and Stripe for Korean solo SaaS builders, providing a practical guide on global tax compliance, fees, and business registration.