Overview

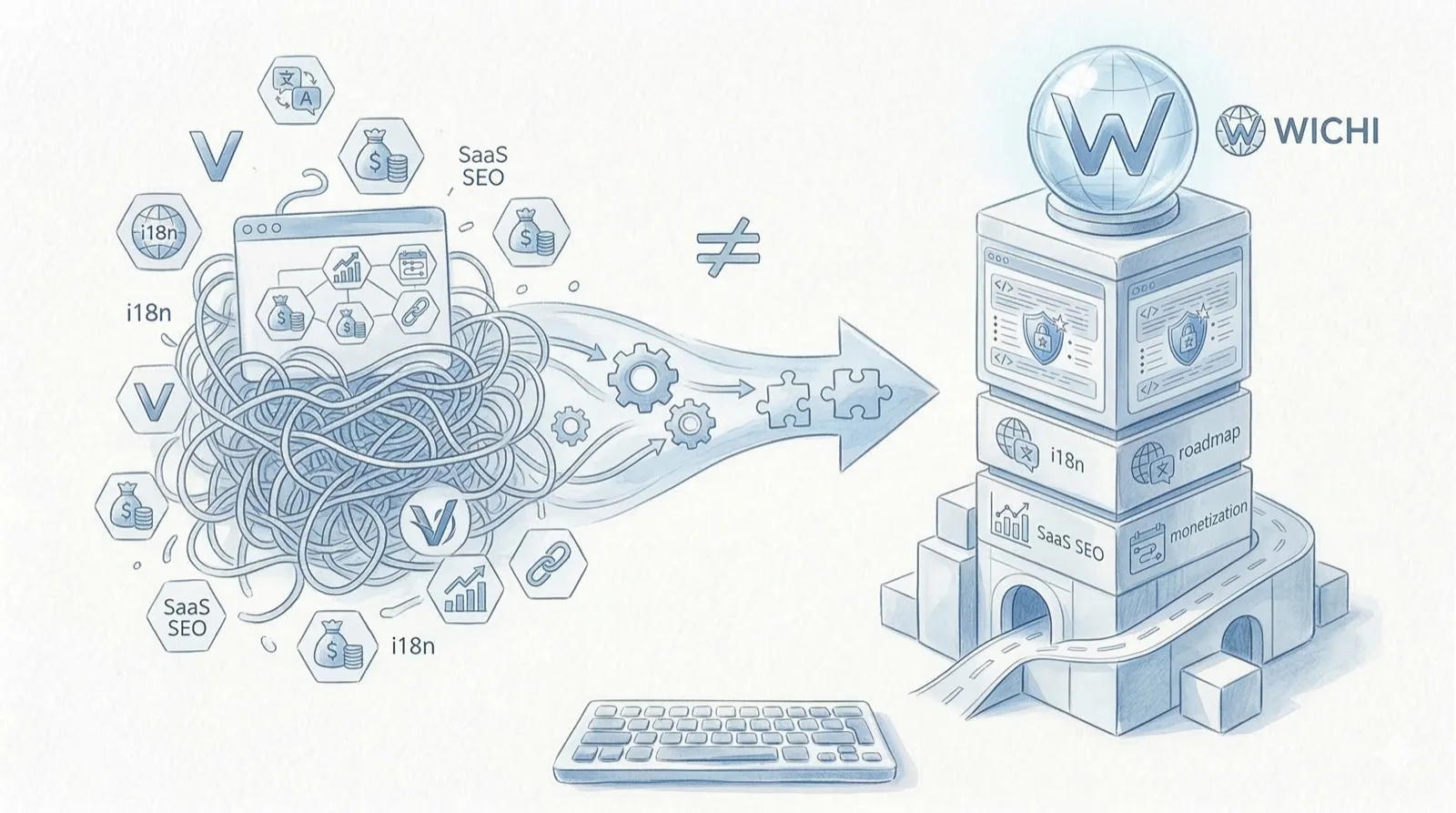

The WICHI MVP, built in 3 days at a hackathon, was in a state of “it works, but it’s not ready to charge money for.” The core analysis functionality ran, and the full flow (signup, payment, analysis, report) was connected. But turning it into a service that actually accepts payments required closing a substantial number of gaps.

This post catalogs the changes made during the transition from prototype to commercial service, organized by category. For each item, I document the prototype state, what changed, and why. It intentionally excludes specific implementation code or cost figures. The focus is on what changed and the reasoning behind each change.

Full Change Category List

| # | Category | Prototype State | Production State | Priority |

|---|---|---|---|---|

| 1 | Security hardening | Basic input limits only | Multi-layer validation + concurrency limits | P0 |

| 2 | Authentication | Basic session-based | JWT + token refresh | P0 |

| 3 | Internationalization (i18n) | English only | KO/EN dual language | P0 |

| 4 | Sample system | None | Locale-specific sample reports | P1 |

| 5 | Payment integration | Semi-manual | Webhook automation + credit system | P0 |

| 6 | Error handling & monitoring | console.log level | Structured errors + GA4 | P1 |

| 7 | DB schema | Minimal table structure | Normalization + indexing | P1 |

| 8 | Frontend hosting | No-code builder | Vercel static hosting | P0 |

| 9 | DNS / SSL / custom domain | Builder default domain | Custom domain + SSL | P0 |

| 10 | SEO / OG images | None | Search Console + meta + OG | P1 |

graph LR

subgraph "Prototype"

A[FE: No-code Builder] --> B[BE: Basic API]

B --> C[DB: Minimal Schema]

B --> D[LLM API]

end

subgraph "Production"

E[FE: Vercel + React] --> F[BE: FastAPI + JWT + Rate Limit]

F --> G[DB: Normalized + Indexed]

F --> H[LLM API]

F --> I[Payment Webhooks]

F --> J[GA4 Events]

E --> K[i18n KO/EN]

E --> L[Sample System]

E --> M[SEO + OG]

end

1. Security Hardening

Prototype State

Only frontend input length limits were in place. The backend had no separate validation logic and processed request bodies as-is. There was no defensive code assuming malicious input. For a hackathon MVP, this was sufficient — the users were a handful of judges with no motivation for malicious use.

Changes

Security hardening was applied across three layers.

Layer 1: Frontend UX Validation

- Input format validation (URL patterns, email format, etc.)

- Input length limits (min/max)

- Immediate guidance messages for special characters and disallowed strings

- Clear conditions for submit button deactivation

Frontend validation is UX, not security. Its role is to explain to users “why this input isn’t accepted.” Actual defense happens on the backend.

Layer 2: Backend Allowlist Validation

- Allowlist-based validation that only permits known-good values

- Enum-type parameters compared against a defined value list

- String parameters go through pattern matching before being accepted

- Disallowed values result in immediate request rejection (400 response)

Why allowlist over denylist: A denylist blocks only “known bad inputs,” leaving it vulnerable to novel attack patterns. An allowlist passes only “known good inputs,” making it safe by default.
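As an illustration only (not WICHI's actual code), the allowlist idea can be sketched in Python as follows. The `ReportLocale` enum, the `target_url` parameter, and the URL pattern are hypothetical stand-ins for whatever parameters the real API accepts.

```python
import re
from enum import Enum
from typing import Tuple


class ReportLocale(str, Enum):
    # Hypothetical enum parameter: only these values are ever accepted.
    KO = "ko"
    EN = "en"


# Hypothetical pattern: a plain http(s) URL with a hostname and an optional simple path.
URL_PATTERN = re.compile(r"https?://[A-Za-z0-9.-]+\.[A-Za-z]{2,}(/[\w./%-]*)?")


class ValidationError(ValueError):
    """Input is not on the allowlist; the API layer would map this to a 400."""


def validate_request(locale: str, target_url: str) -> Tuple[ReportLocale, str]:
    try:
        parsed_locale = ReportLocale(locale)  # enum lookup rejects unknown values
    except ValueError:
        raise ValidationError(f"unsupported locale: {locale!r}") from None
    # Strings must match the known-good pattern in full, not merely avoid bad ones.
    if len(target_url) > 2048 or not URL_PATTERN.fullmatch(target_url):
        raise ValidationError("target_url does not match the allowed pattern")
    return parsed_locale, target_url
```

Anything the pattern does not explicitly describe (query strings, angle brackets, unknown locales) is rejected without having to be anticipated in advance.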

Layer 3: Forbidden Pattern Filtering

- Prompt injection attempt detection and blocking

- System prompt exfiltration attempt blocking

- Filtering of unintended special character combinations

- Forbidden pattern list managed in a separate config file (not hardcoded)
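A minimal sketch of the forbidden pattern layer, assuming the patterns live in a JSON config. The patterns shown are illustrative guesses, not the list the service uses, and the JSON is inlined here only so the example runs standalone.

```python
import json
import re
from typing import List, Optional

# Stand-in for a separate config file; in practice this JSON would be read from disk.
FORBIDDEN_PATTERNS_JSON = """
[
  "ignore (all |any )?(previous|prior) instructions",
  "(reveal|show|print) (your |the )?system prompt",
  "[<>{}`]{3,}"
]
"""


def load_patterns(raw_json: str) -> List["re.Pattern"]:
    # Compile once at startup; matching is case-insensitive.
    return [re.compile(p, re.IGNORECASE) for p in json.loads(raw_json)]


def find_forbidden(text: str, patterns: List["re.Pattern"]) -> Optional[str]:
    """Return the source of the first matching pattern, or None if the text is clean."""
    for pattern in patterns:
        if pattern.search(text):
            return pattern.pattern
    return None
```

Keeping the list outside the code means new patterns can be added without a code change. A regex list like this is a coarse filter and should be treated as one layer among several, not a complete defense against prompt injection.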

Additional Defense: Concurrency Limits

- Per-user cap on concurrent analysis requests

- Queue notification or request rejection when limit is exceeded

- Credit deduction is only finalized after successful analysis (recovered on failure)
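The per-user cap can be sketched as a small in-process limiter. This assumes a single backend process; the cap value and the `TooManyRequests` name are hypothetical, and a multi-instance deployment would need shared state instead of a local dictionary.

```python
import threading
from collections import defaultdict
from contextlib import contextmanager


class TooManyRequests(Exception):
    """The API layer would translate this into a 429 response."""


class ConcurrencyLimiter:
    def __init__(self, max_per_user: int = 2):  # the cap of 2 is an assumption
        self._max = max_per_user
        self._active = defaultdict(int)
        self._lock = threading.Lock()

    @contextmanager
    def slot(self, user_id: str):
        # Claim a slot or fail fast if the user is already at the cap.
        with self._lock:
            if self._active[user_id] >= self._max:
                raise TooManyRequests(user_id)
            self._active[user_id] += 1
        try:
            yield
        finally:
            # Always free the slot, whether the analysis succeeded or failed.
            with self._lock:
                self._active[user_id] -= 1
```

Usage would look like `with limiter.slot(user_id): run_analysis(...)`, so the slot is released even when the analysis raises.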

| Layer | Location | Role | Blocking Point |

|---|---|---|---|

| 1 | Frontend | UX guidance | At input time |

| 2 | Backend entry | Allowlist validation | On request receipt |

| 3 | Backend processing | Forbidden pattern detection | Before processing |

| Additional | Backend | Concurrency limits | At execution time |

graph TD

A[User Input] --> B{FE Format Validation}

B -->|Pass| C[API Request]

B -->|Fail| D[Input Error Guidance]

C --> E{BE Allowlist Validation}

E -->|Pass| F{Forbidden Pattern Filter}

E -->|Fail| G[400 Bad Request]

F -->|Pass| H{Concurrency Limit Check}

F -->|Fail| I[403 Forbidden]

H -->|Available| J[Execute Analysis]

H -->|Exceeded| K[429 Too Many Requests]

J --> L{Analysis Successful?}

L -->|Success| M[Finalize Credit Deduction]

L -->|Failure| N[Recover Credits]Why This Changed

The moment payments are attached, malicious use has financial motivation. With a free MVP, someone entering garbage input causes minimal damage. With a paid service, credit abuse, API exploitation, and prompt injection translate directly into costs. Each LLM API call has a per-call cost, so malicious use directly increases operating expenses.

Security is not “something to do later” — it becomes mandatory the moment payment functionality is added.

2. Authentication

Prototype State

Basic session-based authentication was in use. Sessions persisted after login with no consideration for token expiration or refresh. CORS was set to allow everything (*) for development convenience.

Changes

JWT-Based Authentication

- Separated access tokens and refresh tokens

- Short expiration time on access tokens

- Automatic refresh via refresh tokens

- User role and permission information included in token payload
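To make the access/refresh split concrete, here is a hand-rolled HS256 sketch using only the standard library. It is an illustration of the token flow, not the service's implementation; a production system would normally rely on a vetted JWT library. The secret, the lifetimes, and the `kind` claim are assumptions.

```python
import base64
import hashlib
import hmac
import json

SECRET = b"change-me"            # placeholder; load from the environment in practice
ACCESS_TTL = 15 * 60             # assumed lifetimes; the post does not state them
REFRESH_TTL = 14 * 24 * 3600


def _b64(data: bytes) -> str:
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode()


def _unb64(text: str) -> bytes:
    return base64.urlsafe_b64decode(text + "=" * (-len(text) % 4))


def _sign(signing_input: str) -> bytes:
    return hmac.new(SECRET, signing_input.encode(), hashlib.sha256).digest()


def issue_token(user_id: str, role: str, kind: str, now: float) -> str:
    ttl = ACCESS_TTL if kind == "access" else REFRESH_TTL
    header = _b64(json.dumps({"alg": "HS256", "typ": "JWT"}).encode())
    claims = {"sub": user_id, "role": role, "kind": kind, "exp": int(now + ttl)}
    payload = _b64(json.dumps(claims).encode())
    return f"{header}.{payload}.{_b64(_sign(header + '.' + payload))}"


def verify_token(token: str, expected_kind: str, now: float) -> dict:
    header, payload, signature = token.split(".")
    if not hmac.compare_digest(_sign(header + "." + payload), _unb64(signature)):
        raise PermissionError("bad signature")
    claims = json.loads(_unb64(payload))
    if claims["kind"] != expected_kind or claims["exp"] <= now:
        raise PermissionError("wrong token kind or expired")
    return claims


def refresh_access_token(refresh_token: str, now: float) -> str:
    # A valid refresh token is exchanged for a fresh short-lived access token.
    claims = verify_token(refresh_token, "refresh", now)
    return issue_token(claims["sub"], claims["role"], "access", now)
```

The role travels in the payload, so an endpoint can check permissions from the verified claims without a session lookup.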

CORS Hardening

- Removed wildcard (*) permission

- Explicitly specified allowed domains (production domain, preview domain)

- Explicitly restricted allowed methods and headers

- Enabled Credentials option
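The CORS rules above amount to a small decision function. The sketch below is framework-independent and uses hypothetical domains; a web framework's CORS middleware would normally do this work.

```python
from typing import Dict, Optional

ALLOWED_ORIGINS = {
    "https://app.example.com",       # hypothetical production domain
    "https://preview.example.com",   # hypothetical preview domain
}
ALLOWED_METHODS = "GET, POST, OPTIONS"
ALLOWED_HEADERS = "Authorization, Content-Type"


def cors_headers(origin: Optional[str]) -> Dict[str, str]:
    """Return CORS response headers for an allowed origin, or none at all."""
    if origin not in ALLOWED_ORIGINS:
        return {}  # no CORS headers, so the browser blocks the cross-origin read
    return {
        # Echo the specific origin; browsers reject "*" when credentials are enabled.
        "Access-Control-Allow-Origin": origin,
        "Access-Control-Allow-Credentials": "true",
        "Access-Control-Allow-Methods": ALLOWED_METHODS,
        "Access-Control-Allow-Headers": ALLOWED_HEADERS,
        "Vary": "Origin",
    }
```

Removing the wildcard and enabling credentials go together: once credentials are allowed, the response has to name one explicit origin.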

Rate Limiting

- Dual-layer limiting: IP-based + user-based

- Per-endpoint differentiated limits (authentication endpoints are stricter)

- 429 response on limit breach with retry-after timing
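A sketch of dual-layer limiting with a sliding window, assuming in-memory state and made-up limits. The `/auth/login` path and all numbers are hypothetical; the post does not specify the actual algorithm or thresholds.

```python
import math
from collections import defaultdict, deque
from typing import Optional


class SlidingWindowLimiter:
    def __init__(self, limit: int, window_seconds: float):
        self.limit = limit
        self.window = window_seconds
        self._hits = defaultdict(deque)

    def check(self, key: str, now: float) -> int:
        """Record a hit and return 0 if allowed, else seconds to wait."""
        hits = self._hits[key]
        while hits and hits[0] <= now - self.window:
            hits.popleft()  # drop hits that have left the window
        if len(hits) >= self.limit:
            return max(1, math.ceil(hits[0] + self.window - now))
        hits.append(now)
        return 0


# (IP limiter, user limiter) per endpoint; auth endpoints get stricter numbers.
LIMITS = {
    "/auth/login": (SlidingWindowLimiter(5, 60), SlidingWindowLimiter(5, 60)),
    "default": (SlidingWindowLimiter(60, 60), SlidingWindowLimiter(30, 60)),
}


def retry_after(path: str, ip: str, user_id: Optional[str], now: float) -> int:
    by_ip, by_user = LIMITS.get(path, LIMITS["default"])
    wait = by_ip.check(ip, now)
    if wait == 0 and user_id is not None:
        wait = by_user.check(user_id, now)
    return wait  # 0 means proceed; otherwise respond 429 with Retry-After set to wait
```

The IP layer catches unauthenticated floods, while the user layer stops a single account from spreading requests across many addresses.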

| Item | Prototype | Production |

|---|---|---|

| Auth method | Session-based | JWT (access + refresh) |

| Token expiration | Not considered | Short expiry + auto-refresh |

| CORS | * (allow all) | Explicit domain specification |

| Rate limit | None | Dual-layer IP + user |

| Permission management | None | Roles included in token payload |

Why This Changed

Session-based authentication is simple in a single-server environment, but has limitations in a SaaS architecture where frontend and backend run on different domains. JWT is stateless, making it favorable for server scaling, and allows the frontend to manage tokens directly — well-suited for SPA architectures. CORS and rate limiting are baseline defenses for any service exposing an API externally.

3. Internationalization (i18n)

Prototype State

English only. All UI text was written directly in component code. Button text, guidance messages, and error messages were hardcoded as English strings.

Changes

Frontend Changes

- Separated UI text from code into per-locale JSON files

- Added language toggle UI (KO/EN toggle in header)

- Automatic language detection based on browser locale

- Manual selection saved to local storage

- Date and number formatting adapted per locale

- Locale prefix applied to links and routing paths

Backend Changes

- Analysis report generation branches prompts based on request locale

- System prompts sent to the LLM include language instructions

- API error messages branch by locale

- Email notification templates separated by locale
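The prompt-level branching can be pictured with a sketch like the one below. The base prompt and instruction wording are placeholders, not the prompts WICHI sends.

```python
BASE_SYSTEM_PROMPT = "You are an analyst producing a brand report."  # placeholder text

# One language instruction per supported locale (wording is an assumption).
LANGUAGE_INSTRUCTIONS = {
    "en": "Write the entire report in English.",
    "ko": "Write the entire report in Korean.",
}


def build_system_prompt(locale: str) -> str:
    # Unknown locales fall back to English rather than failing the request.
    instruction = LANGUAGE_INSTRUCTIONS.get(locale, LANGUAGE_INSTRUCTIONS["en"])
    return f"{BASE_SYSTEM_PROMPT}\n{instruction}"
```

The locale comes from the request, so the same endpoint produces a Korean or English report without duplicating the rest of the prompt.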

Translation Management

- Established naming conventions for translation keys (e.g., page.analysis.button.start)

- Fallback to English when a key is missing

- Maintained a translation file update checklist
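The key lookup and English fallback can be sketched as follows. The real frontend is React; Python is used here only to keep the examples in one language. The message strings and the second key are invented for illustration, and the locale detection ignores `q` weights for brevity.

```python
# Stand-in for per-locale JSON files; "ko" is deliberately missing one key.
MESSAGES = {
    "en": {
        "page.analysis.button.start": "Start analysis",
        "page.analysis.error.network": "Network error. Please try again.",
    },
    "ko": {
        "page.analysis.button.start": "분석 시작",
    },
}
DEFAULT_LOCALE = "en"


def translate(key: str, locale: str) -> str:
    # Try the requested locale first, then fall back to English.
    for candidate in (locale, DEFAULT_LOCALE):
        text = MESSAGES.get(candidate, {}).get(key)
        if text is not None:
            return text
    return key  # show the raw key so a missing translation is easy to spot


def detect_locale(accept_language: str) -> str:
    """Pick the first supported language from an Accept-Language style string."""
    for part in accept_language.split(","):
        lang = part.split(";")[0].strip().lower().split("-")[0]
        if lang in MESSAGES:
            return lang
    return DEFAULT_LOCALE
```

With this shape, a missing Korean string degrades to English instead of rendering an empty button.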

| Area | Prototype | Production |

|---|---|---|

| UI text | Hardcoded in components | Separated into per-locale JSON |

| Report content | English only | Branches by request locale |

| Error messages | English only | Per-locale branching |

| Date/number formatting | US format only | Locale-based formatting |

| Routing | Single path | Locale prefix |

| Detection | None | Browser locale auto-detection |

Why This Changed

WICHI is a global SaaS. To enter the Korean market and expand globally at the same time, i18n is not optional — it’s mandatory.

Adding i18n later means ripping apart every UI component. The work of finding hardcoded strings one by one and replacing them with translation keys grows with every component added, so the retrofit gets more expensive the longer it is deferred. The commercialization milestone was the most efficient time to establish the structure.

i18n is a “structure” problem, not a “translation” problem. Separating text from code is the core task — actual translation comes after.

Backend i18n has more considerations than frontend. In an LLM-based service, the analysis output itself must change language, requiring language instructions at the prompt level. This is a fundamentally different scope of work from simply translating UI.

4. Sample System

Prototype State

No sample data existed. Users had to spend credits after signing up to see any analysis results. The structure required payment before users could verify “what kind of results does this service produce?”

Changes

Sample Report System

- Sample reports accessible without login

- English samples: based on a global DTC brand

- Korean samples: based on a major Korean brand

- Sample reports rendered using the same UI as real analysis results

Conversion Funnel Design

- Signup gate placed at the end of sample reports

- Only a certain percentage of the full report is viewable for free

- Gate messaging branches by locale

- GA4 tracking on sample-to-signup conversion events

Sample Selection Criteria

- Selected brands with high recognition for the relevant locale’s users

- Selected brands that produce rich analysis results (thin results have the opposite effect)

- Periodic sample data refresh (outdated data undermines credibility)

| Item | Prototype | Production |

|---|---|---|

| Output preview | Not possible | Available via sample reports |

| Pre-login access | Not possible | Available for samples |

| Locale-specific content | N/A | Market-appropriate brands per locale |

| Conversion funnel | None | Signup gate + GA4 events |

Why This Changed

“I want to see what I’m getting before I pay” is a natural impulse. Especially with a SaaS delivering “analysis reports” — a text-based output — there’s an inherent perception that quality can vary widely. Requiring payment first, with no samples, makes conversion nearly impossible.

The reason for locale-specific brand samples is equally clear. Showing US brand samples to a Korean user leaves the question “does this even apply to our market?” You need to show analysis results for brands that users in each market personally recognize to convey the value of the output.

Samples are not “demos” — they’re “evidence.” They prove to users that “this service delivers results worth paying for.”

5. Payment Integration

Prototype State

A payment page existed, but webhook handling was incomplete. Code to receive payment success events was in place, but there was no handling for edge cases — duplicate events, retry after failure, refunds. Credit allocation was done manually.

Changes

Webhook Automation

- Receive and verify webhook event signatures from the payment platform

- Route to handlers by event type (payment complete, refund, subscription change, etc.)

- Idempotency guarantee — duplicate receipt of the same event is processed only once

- Retry resilience — duplicate prevention even on retries after receipt failure

- All received webhook events logged (for debugging and audit trail)
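A sketch of the verify, log, deduplicate, and route sequence. The HMAC-SHA256 scheme, the event names, and the in-memory stores are assumptions; each payment platform documents its own signature format, and persistence would live in the database.

```python
import hashlib
import hmac
import json

WEBHOOK_SECRET = b"whsec_placeholder"   # hypothetical shared secret
processed_event_ids = set()             # stand-in for a table with a unique constraint
event_log = []                          # stand-in for a persistent audit log


def sign(body: bytes) -> str:
    return hmac.new(WEBHOOK_SECRET, body, hashlib.sha256).hexdigest()


def handle_payment_completed(event: dict) -> str:
    return f"credits granted for order {event['order_id']}"


HANDLERS = {"payment.completed": handle_payment_completed}  # hypothetical event type


def handle_webhook(body: bytes, signature: str) -> str:
    # 1. Reject anything whose signature does not match the raw body.
    if not hmac.compare_digest(sign(body), signature):
        return "rejected: bad signature"
    event = json.loads(body)
    event_log.append(event)  # 2. Log every verified event, duplicates included.
    # 3. Idempotency: an event ID that was already handled is acknowledged and skipped.
    if event["id"] in processed_event_ids:
        return "ignored: duplicate"
    handler = HANDLERS.get(event["type"])
    if handler is None:
        return "ignored: unhandled type"
    result = handler(event)
    processed_event_ids.add(event["id"])  # mark done only after the handler succeeds
    return result
```

Because the ID is recorded only after success, a delivery that failed midway can be retried by the platform and will still be processed exactly once.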

Credit System Design

- Three-stage flow: payment complete → credit charge → deduction on analysis

- Credit balance query API

- Credit deduction is reserved at analysis start, confirmed on completion

- Automatic recovery of reserved credits on analysis failure

- Credit usage history (charge, deduction, recovery records)
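The reserve-confirm pattern can be sketched with an in-memory ledger. Class and method names are hypothetical, and a real implementation would run each step inside a database transaction.

```python
from collections import defaultdict


class InsufficientCredits(Exception):
    pass


class CreditLedger:
    def __init__(self):
        self.balance = defaultdict(int)  # spendable credits per user
        self.reserved = {}               # analysis_id -> (user_id, amount)
        self.history = []                # (kind, user_id, amount) records

    def charge(self, user_id: str, amount: int) -> None:
        self.balance[user_id] += amount
        self.history.append(("charge", user_id, amount))

    def reserve(self, user_id: str, analysis_id: str, amount: int) -> None:
        if self.balance[user_id] < amount:
            raise InsufficientCredits(user_id)  # balance never goes negative
        self.balance[user_id] -= amount
        self.reserved[analysis_id] = (user_id, amount)

    def confirm(self, analysis_id: str) -> None:
        user_id, amount = self.reserved.pop(analysis_id)
        self.history.append(("deduction", user_id, amount))

    def release(self, analysis_id: str) -> None:
        user_id, amount = self.reserved.pop(analysis_id)
        self.balance[user_id] += amount
        self.history.append(("recovery", user_id, amount))


def run_with_credits(ledger, user_id, analysis_id, analyze, cost=1):
    ledger.reserve(user_id, analysis_id, cost)   # hold credits at analysis start
    try:
        result = analyze()
    except Exception:
        ledger.release(analysis_id)              # recover credits on failure
        raise
    ledger.confirm(analysis_id)                  # finalize only after success
    return result
```

Reserving up front prevents a user from starting more analyses than the balance covers, while the release path ensures a failed LLM call never costs a credit.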

Order State Management

- Order state machine: payment pending → payment complete → credits granted → (on refund) credits recovered

- Timestamp recorded for each state transition

- Alerts on abnormal state transitions
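The order flow listed above maps naturally onto an explicit transition table. This sketch follows the bullet's sequence (refund after credits are granted) and adds a failed-payment state; the state names and the `InvalidTransition` exception are hypothetical.

```python
from datetime import datetime, timezone
from enum import Enum


class OrderState(Enum):
    PAYMENT_PENDING = "payment_pending"
    PAYMENT_COMPLETE = "payment_complete"
    PAYMENT_FAILED = "payment_failed"
    CREDITS_GRANTED = "credits_granted"
    CREDITS_RECOVERED = "credits_recovered"


# Every legal move is listed; anything else is abnormal by definition.
ALLOWED = {
    OrderState.PAYMENT_PENDING: {OrderState.PAYMENT_COMPLETE, OrderState.PAYMENT_FAILED},
    OrderState.PAYMENT_COMPLETE: {OrderState.CREDITS_GRANTED},
    OrderState.CREDITS_GRANTED: {OrderState.CREDITS_RECOVERED},  # refund path
}


class InvalidTransition(Exception):
    """An abnormal transition; a real system would also send an alert here."""


class Order:
    def __init__(self):
        self.state = OrderState.PAYMENT_PENDING
        self.history = [(self.state, datetime.now(timezone.utc))]

    def transition(self, new_state: OrderState) -> None:
        if new_state not in ALLOWED.get(self.state, set()):
            raise InvalidTransition(f"{self.state.value} -> {new_state.value}")
        self.state = new_state
        # Record a timestamp for every state change.
        self.history.append((new_state, datetime.now(timezone.utc)))
```

Keeping the table as data makes it easy to review which transitions exist and to alert on any attempt outside it.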

stateDiagram-v2

[*] --> PaymentPending: Enter payment page

PaymentPending --> PaymentComplete: Webhook received (payment success)

PaymentPending --> PaymentFailed: Webhook received (payment failure)

PaymentComplete --> CreditsGranted: Credit charge processed

CreditsGranted --> [*]

PaymentComplete --> RefundRequested: User requests refund

RefundRequested --> CreditsRecovered: Check remaining credits and recover

CreditsRecovered --> RefundComplete: Refund processing complete

RefundComplete --> [*]

PaymentFailed --> [*]

Defensive Logic

- Server-side re-verification of payment amount and product info (client-submitted values are never trusted)

- Detection and blocking of duplicate payments from the same user in rapid succession

- Constraints preventing credit balance from going negative

Why This Changed

In an MVP, “payments kind of work” is sufficient. In a commercial service, it’s not. If credits aren’t reflected immediately after payment, users experience “I paid money but can’t use the service.” If duplicate deductions occur, trust is destroyed.

Webhook-based automation and transaction safety are baseline requirements for any paid service. The reserve-confirm pattern for credit deduction was especially essential since analysis depends on LLM API calls, which always carry a possibility of failure.

In a payment system, “it works most of the time” is not good enough. A single edge case is directly tied to a user’s money.

6. Error Handling and Monitoring

Prototype State

Error handling was at the console.log level. When errors occurred, they appeared in the browser console or as stack traces in backend logs. User-facing error messages were generic “Something went wrong” text. No user behavior data was being collected.

Changes

Frontend Error Handling

- Error Boundaries applied — component errors are isolated so they don’t crash the entire app

- User-friendly messages displayed on API request failure (branching by locale)

- Differentiated guidance for network errors vs. server errors

- Retry buttons provided for retryable errors

Backend Error Handling

- Unified structured error response format (code, message, detail fields)

- Accurate HTTP status code usage (previously most errors returned 500)

- Clear error level classification: user input errors (4xx) vs. server errors (5xx)

- Retry logic with exponential backoff for LLM API call failures

- Analysis failure processing + credit recovery after retry exhaustion
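A sketch of the structured error shape combined with retry and exponential backoff. `TimeoutError` stands in for whatever transient error the LLM client raises, and the error code, status, and delays are assumptions.

```python
import random
import time
from typing import Callable, Optional


class ApiError(Exception):
    def __init__(self, status: int, code: str, message: str, detail: Optional[dict] = None):
        super().__init__(message)
        self.status = status
        self.code = code
        self.message = message
        self.detail = detail or {}

    def to_response(self) -> tuple:
        # Same three fields for every error, paired with an accurate HTTP status.
        body = {"code": self.code, "message": self.message, "detail": self.detail}
        return self.status, body


def call_with_backoff(call: Callable, max_attempts: int = 3,
                      base_delay: float = 1.0, sleep: Callable = time.sleep):
    for attempt in range(max_attempts):
        try:
            return call()
        except TimeoutError as exc:  # stand-in for a transient LLM API failure
            if attempt == max_attempts - 1:
                # Retries exhausted: surface a structured 5xx error to the caller.
                raise ApiError(502, "LLM_UNAVAILABLE", "Analysis failed after retries.",
                               {"attempts": max_attempts}) from exc
            # Delay doubles each attempt (1s, 2s, ...) plus a little jitter.
            sleep(base_delay * 2 ** attempt + random.uniform(0, 0.1))
```

The caller that catches `ApiError` is the natural place to mark the analysis as failed and release the reserved credits.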

Monitoring — GA4 Integration

- Key conversion events defined and tracked:

| Event | Trigger Point | Purpose |

|---|---|---|

| sign_up | Signup completion | Measure signup conversion rate |

| login | Login | Identify return visit patterns |

| view_sample | Sample report view | Measure sample effectiveness |

| begin_checkout | Payment page entry | Checkout funnel start point |

| purchase | Payment completion | Measure payment conversion rate |

| begin_analysis | Analysis start | Credit usage patterns |

| analysis_complete | Analysis completion | Analysis success rate |

| analysis_failed | Analysis failure | Failure rate monitoring |

Structured Logging

- Request ID-based tracing — a single request can be tracked across multiple services

- Systematized log levels (DEBUG, INFO, WARNING, ERROR, CRITICAL)

- Sensitive data (tokens, passwords, API keys) masked in logs
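Request ID tracing and masking can both live in a log formatter. This sketch uses the standard `logging` module; the `request_id` attribute name and the masking regex are assumptions, and the regex only covers `key=value` style text.

```python
import json
import logging
import re

# Masks values that follow token=, password=, or api_key= in a log message.
SENSITIVE = re.compile(r"(?i)\b(token|password|api_key)=([^\s&]+)")


class JsonFormatter(logging.Formatter):
    def format(self, record: logging.LogRecord) -> str:
        message = SENSITIVE.sub(r"\1=***", record.getMessage())
        return json.dumps({
            "level": record.levelname,
            # Supplied per call via logger.info(..., extra={"request_id": ...}).
            "request_id": getattr(record, "request_id", None),
            "message": message,
        })


def build_logger(name: str = "app") -> logging.Logger:
    logger = logging.getLogger(name)
    handler = logging.StreamHandler()
    handler.setFormatter(JsonFormatter())
    logger.addHandler(handler)
    logger.setLevel(logging.INFO)
    return logger
```

Every line then carries the same `request_id`, so one request can be followed across log sources by filtering on that field.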

Why This Changed

You can’t improve without data. You need to know where users drop off, what the payment conversion rate is, and what percentage of users who start an analysis actually complete it — only then can you set the next priorities.

Error handling is the same story. “Something went wrong” is useless to both users and developers. Users need to know “what went wrong and what they should do.” Developers need “where and why it failed” recorded in a structured format.

7. Database Schema Evolution

Prototype State

Only minimal tables existed — a users table and an analysis results table, essentially. Normalization hadn’t been done. No indexes existed beyond primary keys, and there was no consideration for query performance.

Changes

Table Additions and Normalization

- New credit transaction table (charge, deduction, recovery history)

- New order table (payment state machine)

- New sample report table

- User settings table separated (locale, notification preferences, etc.)

- Analysis results table restructured (locale column added, metadata normalized)

Indexing

- Indexes added for frequently accessed query patterns

- Credit balance query optimized (user ID + creation date composite index)

- Analysis result list query optimized (user ID + status + creation date)

- Eliminated unnecessary full table scans

Data Integrity

- Foreign key constraints added

- CHECK constraint to prevent negative credit balances

- Triggers or application-level validation for state transitions

- Soft delete pattern applied (preventing accidental data loss)
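The integrity rules above can be demonstrated with SQLite from the standard library. SQLite is used only so the sketch runs standalone; the table and column names are hypothetical, and the production schema is not shown in this post.

```python
import sqlite3

SCHEMA = """
CREATE TABLE users (
    id INTEGER PRIMARY KEY,
    email TEXT NOT NULL UNIQUE,
    credit_balance INTEGER NOT NULL DEFAULT 0 CHECK (credit_balance >= 0),
    deleted_at TEXT  -- soft delete marker: NULL means the row is active
);
CREATE TABLE credit_transactions (
    id INTEGER PRIMARY KEY,
    user_id INTEGER NOT NULL REFERENCES users(id),
    kind TEXT NOT NULL CHECK (kind IN ('charge', 'deduction', 'recovery')),
    amount INTEGER NOT NULL CHECK (amount > 0),
    created_at TEXT NOT NULL DEFAULT CURRENT_TIMESTAMP
);
-- Composite index matching the "history for one user, by date" query pattern.
CREATE INDEX idx_credit_tx_user_created
    ON credit_transactions (user_id, created_at);
"""


def open_db() -> sqlite3.Connection:
    conn = sqlite3.connect(":memory:")
    conn.execute("PRAGMA foreign_keys = ON")  # SQLite enforces FKs only when enabled
    conn.executescript(SCHEMA)
    return conn
```

With these constraints, an update that would push `credit_balance` below zero fails with `sqlite3.IntegrityError` even if the application code forgot to check.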

| Item | Prototype | Production |

|---|---|---|

| Table count | 2-3 | 7+ |

| Indexes | PK only | Composite indexes based on query patterns |

| Foreign keys | None | Applied to all relationships |

| Data integrity | Application-level only | DB-level constraints + app-level validation |

| Deletion approach | Hard delete | Soft delete |

Why This Changed

In a prototype, the goal is “data gets saved.” In a commercial service, the goal is “data is accurate, queryable fast, and not accidentally lost.”

The addition of the credit system in particular made transaction consistency critical. If credit deduction and analysis execution aren’t processed atomically, you get situations where credits are deducted but the analysis fails. DB-level constraints are the last safety net against such scenarios.

8. Frontend Hosting

Prototype State

The frontend was hosted on a no-code builder. It was fast for building UI during the hackathon, but had limits on customization and cost control at the commercialization stage.

Changes

- Fully migrated from no-code builder to code-based frontend (React + Vite)

- Switched to Vercel-based static hosting

- Built git-push-based automatic deployment pipeline

- Automatic Preview Deploy created for each PR

- Instant rollback to previous deployment versions

- Environment variables separated into Production / Preview / Development

- All builder-specific dependencies completely removed

| Item | No-code Builder | Vercel |

|---|---|---|

| Deploy trigger | Manual from editor | git push → automatic |

| PR preview | Not available | Preview Deploy per PR |

| Rollback | Manual restore | Instant rollback |

| Environment variables | Limited | Separated by environment |

| Code control | Partial | Full |

| Monthly cost | Paid subscription | Free tier |

Why This Changed

Vercel’s free tier was more economical than the no-code builder’s monthly subscription. More importantly, direct control over frontend code was required to implement i18n, SEO, custom components, and other features necessary for commercialization. Prototyping tools and production hosting serve different roles.

9. DNS / SSL / Custom Domain

Prototype State

The site ran on the default subdomain provided by the no-code builder. SSL was auto-provisioned by the builder. No custom domain was connected.

Changes

Custom Domain Setup

- Purchased and registered a production domain

- Configured nameserver records in Cloudflare DNS

- Connected custom domains to both frontend (Vercel) and backend (Railway)

- Set up www-to-root domain redirect

SSL Certificates

- Vercel: Automatic SSL provisioning (Let’s Encrypt)

- Backend: Railway automatic SSL

- Certificate renewal is auto-managed on both sides

DNS Record Cleanup

- Accurate A record and CNAME record configuration

- Removed unnecessary legacy records

- Set up redirect from previous domain after DNS propagation confirmation

| Item | Prototype | Production |

|---|---|---|

| Domain | Builder subdomain | Custom domain |

| SSL | Builder auto-provision | Auto-provisioning on both sides |

| DNS management | N/A | Cloudflare |

| www redirect | N/A | Configured |

Why This Changed

A subdomain like something.builder-name.app is fine for a prototype, but undermines trust for a paid service. A custom domain is the baseline of brand identity, and SSL is the baseline of user data protection. For a service handling payment information, neither is negotiable.

10. SEO / OG Images

Prototype State

Zero SEO presence. No robots.txt, no sitemap.xml. Meta tags were at defaults, and Open Graph images were not configured. The service had never been registered with any search engine.

Changes

Search Engine Registration

- Registered domain property in Google Search Console

- Ownership verified via DNS TXT record

- Auto-generated and submitted sitemap.xml

- Configured robots.txt (explicitly specifying crawlable paths)
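Generating both files is simple enough to sketch with the standard library. The URLs and the disallowed paths below are hypothetical examples, not the site's real routes.

```python
import xml.etree.ElementTree as ET
from typing import Iterable

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(urls: Iterable[str]) -> str:
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        # One <url><loc>...</loc></url> entry per public page.
        ET.SubElement(ET.SubElement(urlset, "url"), "loc").text = url
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


def build_robots(sitemap_url: str, disallow: Iterable[str] = ("/api/", "/dashboard/")) -> str:
    lines = ["User-agent: *", "Allow: /"]
    lines += [f"Disallow: {path}" for path in disallow]  # keep private paths out of crawls
    lines.append(f"Sitemap: {sitemap_url}")
    return "\n".join(lines) + "\n"
```

Running this at build time keeps the sitemap in step with the route list instead of relying on manual updates.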

Meta Tag Optimization

- Per-page title and description optimization

- Added structured data (JSON-LD) with SoftwareApplication schema

- Canonical URL configuration (preventing duplicate content)

- Per-locale hreflang tags (multilingual search exposure)
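The hreflang and JSON-LD output can be sketched as plain string builders. The base URL, the locale-prefixed path layout, and the `applicationCategory` value are assumptions; the real pages are rendered by the React frontend.

```python
import json
from html import escape
from typing import List

BASE_URL = "https://example.com"   # hypothetical domain
LOCALES = ["en", "ko"]


def hreflang_tags(path: str) -> List[str]:
    tags = []
    for locale in LOCALES:
        href = escape(f"{BASE_URL}/{locale}{path}", quote=True)
        tags.append(f'<link rel="alternate" hreflang="{locale}" href="{href}">')
    # x-default tells search engines which version to show for other languages.
    default_href = escape(f"{BASE_URL}/en{path}", quote=True)
    tags.append(f'<link rel="alternate" hreflang="x-default" href="{default_href}">')
    return tags


def software_application_jsonld(name: str, description: str) -> str:
    data = {
        "@context": "https://schema.org",
        "@type": "SoftwareApplication",
        "name": name,
        "description": description,
        "applicationCategory": "BusinessApplication",
    }
    # Escape "</" so the JSON cannot close the script tag early.
    payload = json.dumps(data).replace("</", "<\\/")
    return f'<script type="application/ld+json">{payload}</script>'
```

Each locale version of a page should emit the full set of alternates, including a link to itself, so the language variants reference each other consistently.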

Open Graph Images

- Created a main service OG image

- OG image branching by key pages

- OG images stored within the project (removed external CDN dependency)

- Twitter Card meta tags also configured

Technical SEO

- Page load speed optimized (a side benefit of the static hosting migration)

- Mobile responsiveness verified

- Core Web Vitals baseline achieved

graph TD

A[Search Engine Crawler] --> B[Check robots.txt]

B --> C[Parse sitemap.xml]

C --> D[Crawl Pages]

D --> E[Collect Meta Tags]

D --> F[Collect Structured Data]

D --> G[Check hreflang]

E --> H[Display in Search Results]

F --> H

G --> I[Branch Search Results by Locale]

J[Social Share] --> K[Parse OG Meta Tags]

K --> L[Display OG Image + Title + Description]

Why This Changed

For users to find a paid SaaS, it needs to be discoverable in search. For an early-stage SaaS starting without ad spend, organic search is effectively the only free acquisition channel. Without SEO, even the best service remains invisible.

OG images determine the first impression when links are shared socially. The difference between a cleanly displayed title, description, and image versus a blank preview directly affects click-through rates.

Prioritization Criteria

Since all 10 categories can’t be tackled simultaneously, priorities had to be set. P0 and P1 were divided using the following criteria.

P0 (Must-have before launch — minimum requirements to accept payments)

- Security hardening: security is non-optional when payments are involved

- Authentication: with frontend and backend on separate domains, the API cannot be protected without expiring tokens and restricted CORS

- Payment integration: manual credit allocation doesn’t scale

- Frontend hosting: i18n and SEO can’t be implemented without code control

- DNS/SSL/domain: a paid service can’t operate on a builder subdomain

- i18n: essential for Korean market entry; retrofitting later gets more expensive with every component added

P1 (Must-have post-launch — requirements for growth)

- Sample system: directly impacts conversion rate

- Error handling/monitoring: improvement is impossible without data

- DB schema refinement: data integrity and performance

- SEO/OG images: establishing an acquisition channel

graph TD

subgraph "P0: Required Before Payments"

A[Security Hardening] --> B[Authentication]

B --> C[Payment Integration]

D[FE Hosting Migration] --> E[DNS/SSL/Domain]

D --> F[i18n]

end

subgraph "P1: Required for Growth"

G[Sample System]

H[Error Handling/Monitoring]

I[DB Schema Refinement]

J[SEO/OG Images]

end

C --> G

F --> G

E --> J

B --> H

C --> I

| Priority | Criterion | Categories |

|---|---|---|

| P0 | Cannot accept payments without this | Security, Auth, Payments, Hosting, DNS/SSL, i18n |

| P1 | Cannot grow without this | Samples, Monitoring, DB refinement, SEO |

| P2 | Nice-to-have but launchable without | (Items not covered in this post) |

Full Summary

| # | Area | Prototype | Production | Priority |

|---|---|---|---|---|

| 1 | Security | Basic input limits | 3-layer validation + concurrency limits + transaction safety | P0 |

| 2 | Auth | Session-based | JWT + CORS + Rate Limit | P0 |

| 3 | i18n | English only | KO/EN dual language (FE + BE) | P0 |

| 4 | Samples | None | Locale-specific sample reports + signup gate | P1 |

| 5 | Payments | Semi-manual | Webhook automation + credit system | P0 |

| 6 | Errors/Monitoring | console.log | Structured errors + GA4 with 8 events | P1 |

| 7 | DB | Minimal tables | Normalization + indexing + integrity constraints | P1 |

| 8 | Hosting | No-code builder | Vercel static hosting | P0 |

| 9 | DNS/SSL | Builder subdomain | Custom domain + auto SSL | P0 |

| 10 | SEO | None | Search Console + sitemap + OG images | P1 |

Retrospective

These 10 items were not things “missing from the MVP.” They were things that weren’t needed at the prototype stage.

The purpose of a prototype is to validate core value. The only question was “is this analysis useful?” — and for that purpose, the MVP was sufficient.

The purpose of commercialization is to deliver that value reliably, repeatedly, and in a way users can trust. All 10 items above fall under the latter.

A prototype answers “is this possible?” A commercial service answers “can this be trusted?”

Patterns observed during the transition:

- The moment payments attach, the standard for everything changes. Security, error handling, data integrity — items that were “nice-to-have” when free become “must-have” when paid.

- i18n is a structural problem. It’s about separating text, not translating. Do it when your component count is small.

- Samples are trust devices, not marketing. Showing users “what this service delivers” is not a sales tactic — it’s basic respect for the user.

- Without monitoring, improvement relies on intuition. You need data to set priorities.

- DB constraints are the last line of defense. Even when application code has bugs, DB-level constraints protect data integrity.

Items not covered in this post — blog, email marketing, A/B testing, advanced analysis features, and more — will be documented separately in future posts.
